How to install Apache Spark

How to shutdown master and slave Spark processes $ SPARK_HOME/sbin/stop-slave.sh $ SPARK_HOME/sbin/stop-master.shĮnd of the article, we’ve seen how to Install Apache Spark on Ubuntu. How to access python spark shell $ /opt/spark/bin/pyspark How to access Spark shell $ /opt/spark/bin/spark-shell

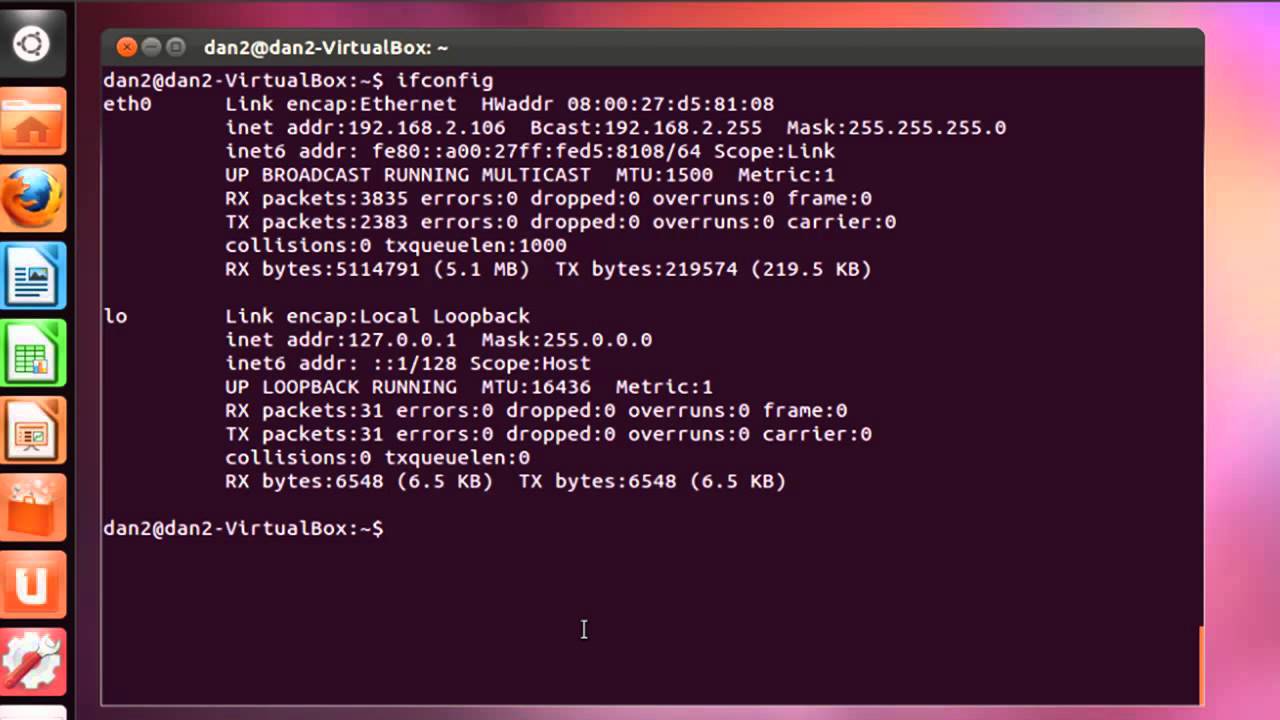

Once worker get started, then go back to the browser and access spark UI. Localhost: starting .worker.Worker, logging to you are not getting start-slave.sh file using locate command $ sudo updatedb $ locate start-slave.sh Sample Output: start-workers.sh password: Step 7: Start Spark worker $ start-workers.sh spark://localhost:7077 Sample Output: sudo ss -tunelp | grep 8080 The Apache and Spark command lines will run best with a Spark shell running. You should find this in the folder where Apache Spark is located. Starting .master.Master, logging to Step 6: Verify the TCP port $ sudo ss -tunelp | grep 8080 Log into your browser and choose server tab / configure. Traverse to the spark/ conf folder and make a copy of the spark-env.sh. curl -O Extract Spark tarball tar xvf spark-3.1.1-bin-hadoop3.2.tgz Move spark directory to /opt sudo mv spark-3.1.1-bin-hadoop3.2/ /opt/spark Configure Spark environment vim ~/.bashrcĪdd below line: export SPARK_HOME=/opt/spark export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin Reflect or activate ~/.bashrc source ~/.bashrc Step 5: Start a standalone master server $ start-master.sh (On Master only) To setup Apache Spark Master configuration, edit spark-env.sh file. OpenJDK 64-Bit Server VM (build 11.0.11+9-Ubuntu-0ubuntu2.20.10, mixed mode, 4: Download Apache Spark on Ubuntu 20.10Ĭheck out for the latest Apache Spark version. OpenJDK Runtime Environment (build 11.0.11+9-Ubuntu-0ubuntu2.20.10) sudo apt install curl mlocate default-jdk -y Step 3: Verify Java version $ java -version java -version As of now we’ll install default Java on Ubuntu. Java package is a prerequisite to use Apache Spark. This will help users in simplifying graph analytics.

Apache Spark will include several graph algorithms.

HOW TO INSTALL APACHE SPARK UPDATE

sudo apt update Step 2: Install Java on Ubuntu 20.10 It is for enhancing graphs and enabling graph-parallel computation. It is recommended to update the system before installation of Apache Spark. Free Big Data Hadoop Spark Developer Course: utmmediumDescriptionFirstF.
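The ~/.bashrc configuration described in this article is done by hand in an editor. As an optional alternative, here is a minimal sketch that appends the same two export lines only when they are not already present, so that running it twice does not duplicate them. The function names `append_once` and `configure_spark_env` are hypothetical helpers introduced for illustration; they are not part of Spark. The sketch assumes Spark was moved to /opt/spark as shown in the article.

```shell
# Append a line to a file only if that exact line is not already there.
# append_once is a hypothetical helper, not a Spark or system command.
append_once() {
  local file="$1" line="$2"
  # Create the file if it does not exist yet so grep has something to read.
  touch "$file"
  # -q: quiet, -x: match the whole line, -F: fixed string (no regex)
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Write the two Spark environment lines from the article into the given file.
# configure_spark_env is also a hypothetical name; /opt/spark is assumed.
configure_spark_env() {
  local file="$1"
  append_once "$file" 'export SPARK_HOME=/opt/spark'
  # Single quotes keep $PATH and $SPARK_HOME unexpanded in the file.
  append_once "$file" 'export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin'
}
```

To use it, call `configure_spark_env ~/.bashrc` and then run `source ~/.bashrc` as in the manual procedure. The sketch only matches exact lines, so a differently formatted export that already exists in the file would not be detected.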